AI Validation in GxP

Why CSV Is No Longer Sufficient

In the pharmaceutical industry, GxP principles rest on system prerequisites that assume reproducible “passed” or “not passed” test results and static system definitions. Classic Computer System Validation (CSV), a central building block of regulatory evidence in the industry, rests on the same assumptions. This framework has worked well in the past, despite numerous digital and technological innovations. However, with the rise of AI, the equation no longer holds.

The reason is that AI systems are, by definition, non-deterministic systems. This means that the same input can and, with high probability, will lead to varying output, as is always the case with learning systems. Can CSV keep pace with this dynamic and ensure the safety and reliability that are a conditio sine qua non in the pharmaceutical industry? The good news is that it can. However, this is only possible if it evolves beyond the current black-and-white patterns.

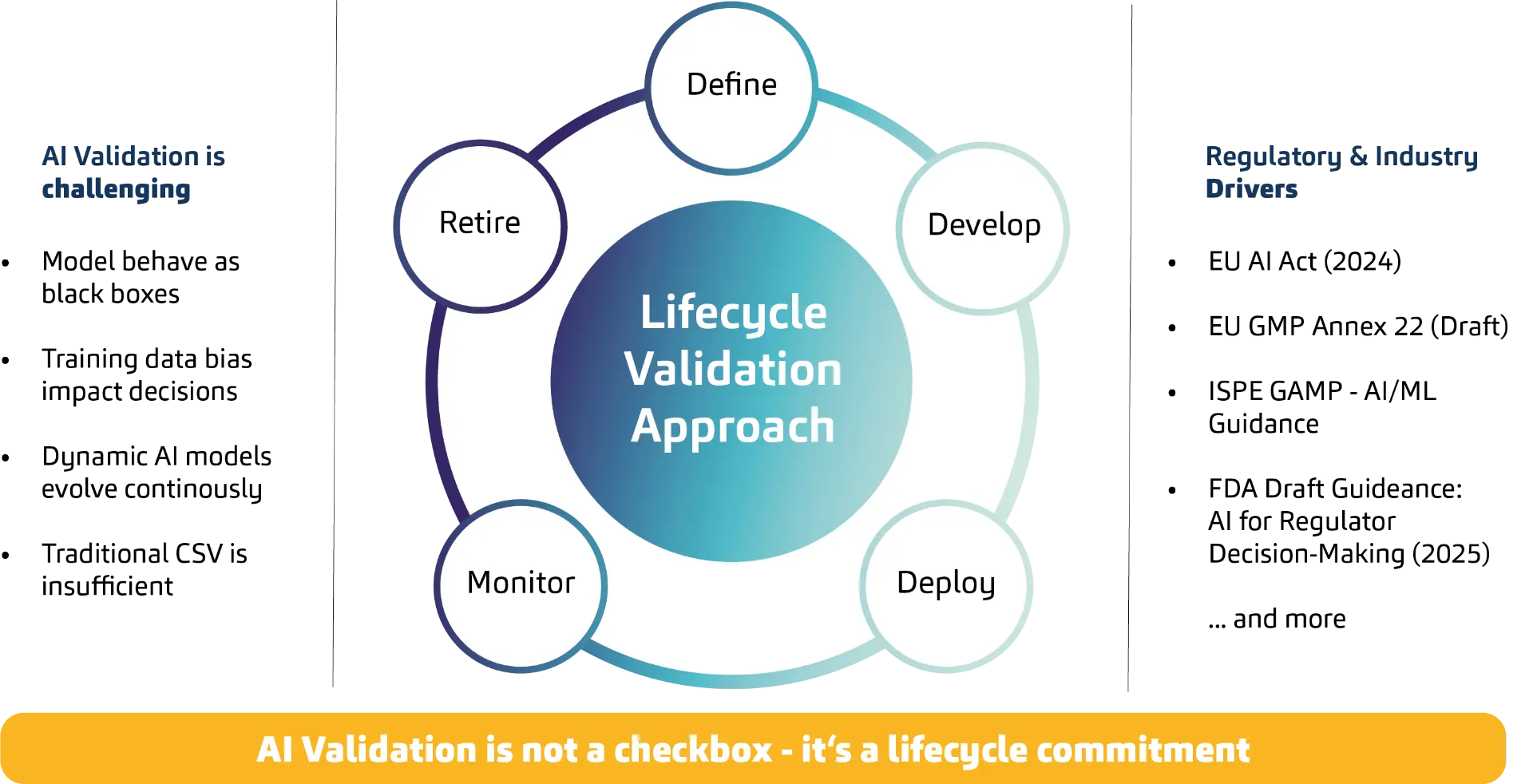

AI Validation requires a lifecycle approach

Broadly speaking, the task is to expand the perspective in AI validation from a point-in-time model to an inherent lifecycle responsibility. Classic IT validation follows a defined process: define the system state, test requirements, close deviations, approve, release, done. AI breaks this logic because output and behavior are not derived solely from a defined system state, but from a data-driven model and its context of use. Outputs are probabilistic, not binary. Because models are data-driven, retraining, drift, and changes in data distributions over time directly affect the outcome.

Pharma isn’t new to uncertainty, but AI is different

Interestingly, this profound change is by no means beyond the pharmaceutical industry’s imagination. Pharmaceutical products, such as tablets, come close to deterministic behavior. Here, GxP approaches have, over decades, ensured an almost breathtaking level of perfection. But at the same time, these products are also part of a “pharmacological system.” In it, diseases, individual patients, environmental conditions, and pharmacological products interact in a non-trivial and, above all, non-deterministic way.

Additionally, from a completely different perspective, cloud systems are also non-deterministic for a pharmaceutical manufacturer because the supplier implements changes without the user being able to control their content and impact. After an update, the system is a different one, whether it remains functional or not. From the software supplier’s point of view, the same system can be deterministic because the changes are known.

In contrast to these two scenarios, however, the “probabilistic momentum” in AI is a direct consequence of learning, i.e., a system principle. Still, the examples make clear that completely deterministic systems are more of a theoretical construct and that the industry is already capable of dealing with probabilities in a GxP-compliant manner.

And finally, the comparison to the MedTech industry is also instructive, given the related topics and regulatory frameworks. Here, AI already supports decisions of the highest relevance for patients’ lives, for example in skin cancer detection or breast cancer diagnostics. Such systems are (strictly speaking) also non-deterministic, are trained on large data sets, and develop over time. And they, too, must be validated. In short: CSV can, must, and will hold in the AI era. But anyone who treats AI with deterministic test patterns creates a false sense of security or inflates the effort without addressing the central risks.

What changes in validation for AI

AI brings topics to the foreground that are rather unfamiliar to classic CSV. The following aspects illustrate the nature of the change. Because quality results not only from the system state but also from the model, the data, and behavior over time, systems can behave like black boxes. This requires a strong focus on explainability and the establishment of robust human-in-the-loop control mechanisms. Another aspect is that bias in training data can affect results and must therefore be treated as a quality and risk topic. Added to this is change over time: retraining, drift, and changes to data pipelines influence model behavior. That can be positive, but only if the drift runs in the right direction and remains controlled.
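To make “controlled drift” more tangible, the following is a minimal sketch of one common way to quantify a shift in an input distribution, the Population Stability Index (PSI). It is an illustration under stated assumptions, not a method prescribed by this article or by any guideline: the function name, the choice of ten equal-width bins, and the alert threshold of 0.25 (a widely used rule of thumb) are all assumptions that would need a documented, risk-based justification in a real GxP setting.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
) -> float:
    """Compare two samples of one numeric feature; 0.0 means identical binning."""
    if not reference or not current:
        raise ValueError("both samples must be non-empty")

    # Bin edges come from the reference (validation-time) data only.
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant feature

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range land in the outermost bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        eps = 1e-6  # floor to avoid log(0) for empty bins
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


# Hypothetical alert limit; 0.25 is a rule of thumb, not a regulatory value.
PSI_ALERT_THRESHOLD = 0.25

validation_data = [i / 100 for i in range(100)]        # data seen at validation
production_data = [0.5 + i / 200 for i in range(100)]  # shifted production data

psi = population_stability_index(validation_data, production_data)
drift_detected = psi > PSI_ALERT_THRESHOLD
```

A check of this kind would typically run on a schedule and feed a documented escalation path (review, impact assessment, possible retraining under change control) rather than trigger automatic model changes.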

The problem that already surfaced in the cloud example becomes apparent here as well: controllability and evidence arise through governance, monitoring, and controlled changes. With dynamic, probabilistic behavior, this can only succeed if validation is, going forward, understood as real-time validation in the sense of a convergence of testing and monitoring; if classic pass/fail validation logic develops toward statistical assurance and scenario coverage; and if system validation becomes the validation of an ecosystem of data, model, and context of use. Ultimately, using AI in a GxP context means assuming responsibility for the system’s lifecycle and accepting that the system may change continuously.
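The move from pass/fail logic toward statistical assurance can be illustrated with a small sketch. Instead of asking whether a single test case passed, the acceptance criterion asks whether the lower confidence bound on the observed success rate still meets a predefined requirement. The example uses the Wilson score interval; the function names, the 95% confidence level (z = 1.96), and the required rate of 0.95 are assumptions chosen for illustration, not values taken from this article or from any regulation.

```python
import math


def wilson_lower_bound(successes: int, trials: int, z: float = 1.96) -> float:
    """Lower bound of the Wilson score interval for a success proportion."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")

    p = successes / trials
    denom = 1 + z * z / trials
    centre = p + z * z / (2 * trials)
    margin = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials))
    return (centre - margin) / denom


def meets_acceptance(
    successes: int, trials: int, required_rate: float, z: float = 1.96
) -> bool:
    """Accept only if the lower confidence bound reaches the required rate."""
    return wilson_lower_bound(successes, trials, z) >= required_rate


# Hypothetical acceptance criterion: at least 95% correct outputs.
REQUIRED_RATE = 0.95

# 96 of 100 correct looks like a pass, but the evidence is too thin.
small_sample_ok = meets_acceptance(96, 100, REQUIRED_RATE)

# 980 of 1000 correct supports the claim with the stated confidence.
large_sample_ok = meets_acceptance(980, 1000, REQUIRED_RATE)
```

The design choice here is that sample size becomes part of the evidence: an observed 96% on 100 cases does not demonstrate a 95% requirement, whereas 98% on 1,000 cases does under these assumptions. Scenario coverage would add a further dimension by applying such criteria per defined scenario rather than to one pooled test set.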

A practical, lifecycle-based validation approach

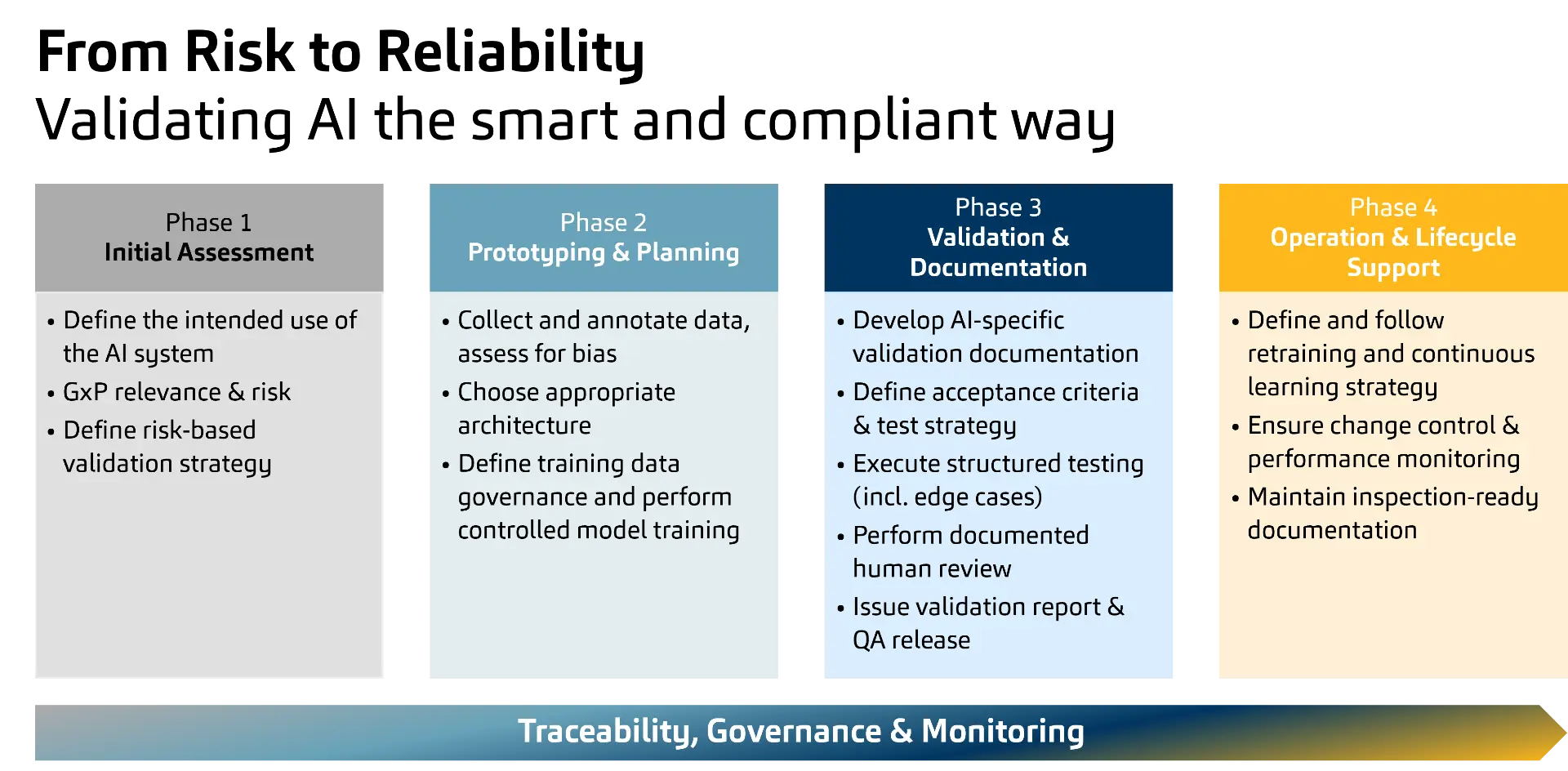

Against this background, the concrete question is which AI use cases should be deployed in GxP and how the validation and operating framework should be designed so that it remains auditable and at the same time controls the dynamics of AI. Practically, this can be structured in four steps that lead to a future-proof, lifecycle-oriented validation approach.

Four-Phase AI Approach

Regulators are moving to lifecycle expectations

A look at the most important regulatory developments of recent years shows that the points outlined above are not an ivory-tower discussion. The EU AI Act defines an “AI system” as a machine-based system that is designed to operate with varying levels of autonomy, may exhibit adaptiveness after deployment, and infers from the input it receives how to generate outputs such as predictions, content, recommendations, or decisions. In parallel, guidelines emphasize the lifecycle principle, including EU GMP Annex 22 (Draft), ISPE GAMP AI/ML Guidance, and current FDA discussions. As early as 2019, the FDA noted in its proposed framework for modifications to AI/ML-based SaMD that classic approval logic assumes static systems, while AI/ML systems can be adaptive. Building on that, the FDA, Health Canada, and the MHRA emphasize in their Good Machine Learning Practice guiding principles (2021) that models must be monitored over the lifecycle and changes must be controlled and documented.

The road ahead

Against this background, engaging with the shift that AI brings to validation practice is not a theoretical exercise but rather both a business and a regulatory imperative. The risk is not AI use, but AI use without a validation approach that treats non-deterministic behavior, lifecycle changes, and data dependency as the core of quality assurance.

Authors

Would you like to learn more about this topic or discuss individual challenges?

Our contacts are available for a personal consultation.